The Open-Weight Inflection: What 30 Days of Frontier Releases Mean for Enterprise AI

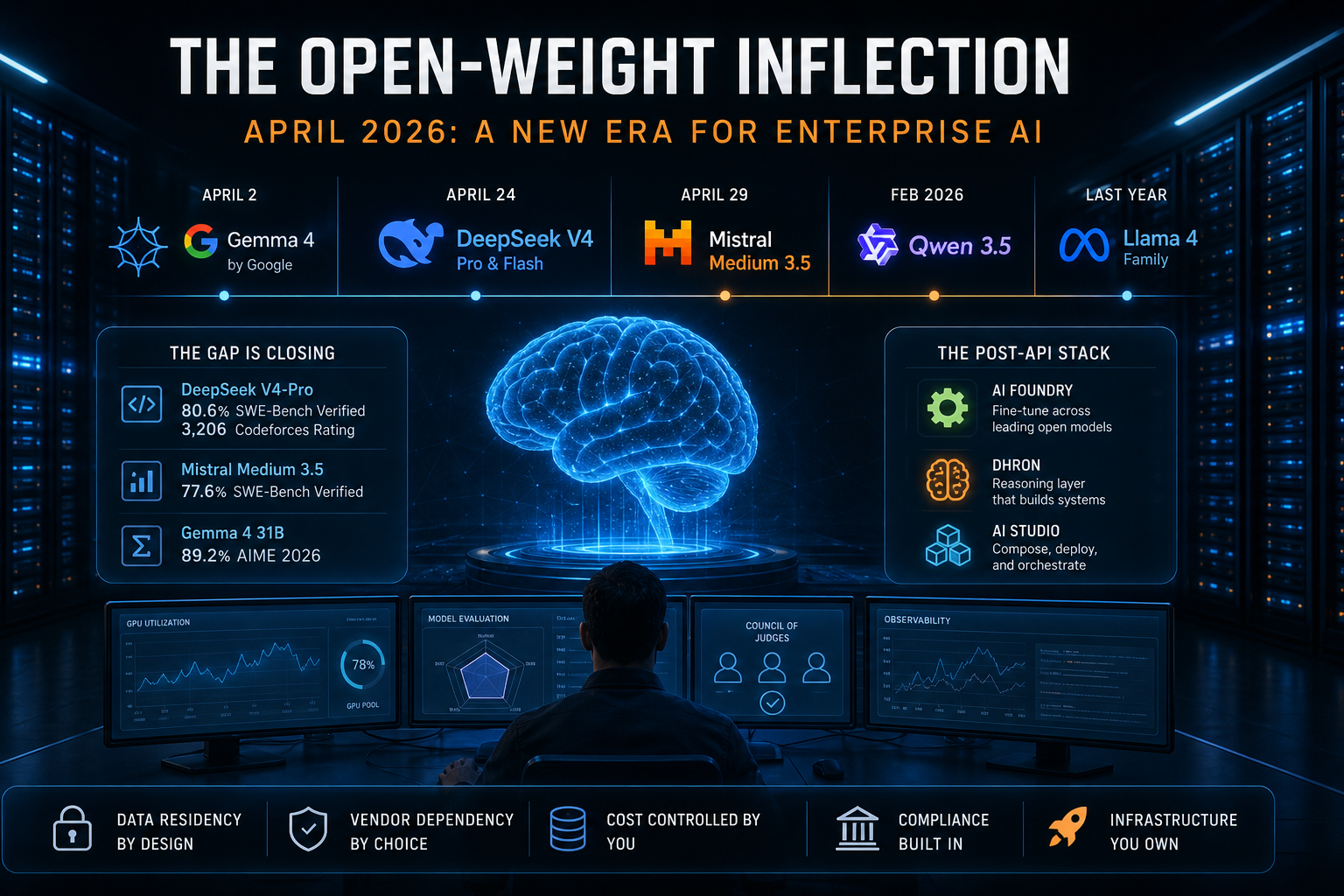

Three frontier-class open-weight models shipped in April 2026: Google's Gemma 4 on the 2nd, DeepSeek V4 (Pro and Flash) on the 24th, Mistral Medium 3.5 on the 29th. Add Qwen 3.5 from February and the Llama 4 family from last year, and the open-weight side of the frontier is more populated than at any point since serious LLMs existed.

The numbers worth quoting are the ones the model cards actually publish. DeepSeek V4-Pro hit 80.6% on SWE-Bench Verified and a Codeforces rating of 3,206 — the highest competitive-programming score any model had posted at release, open or closed. Mistral Medium 3.5 came in at 77.6% on SWE-Bench from a 128B dense model that self-hosts on four GPUs. Gemma 4's 31B scored 89.2% on AIME 2026 at a size that fits on a workstation. These are vendor-reported, and benchmark-to-production gaps are real, but the trend is hard to argue with: the capability gap between the best open-weight models and the closed frontier is now measured in single percentage points on most workloads, not whole categories.

For a CTO architecting an AI stack for the next year, that changes the math. From where I sit, running the backend at a company that bet entirely on self-hosted AI, this is the shift we've been building toward.

The capability tax is gone

Until recently, "sovereign AI" came with a real tradeoff. You ran an open model on infrastructure you controlled, and you accepted a noticeable quality gap in exchange for the privacy and control. That tradeoff was acceptable for banks, hospitals, defense, government — anyone for whom data residency was non-negotiable — but it was a tax.

The tax is now small enough to ignore on most enterprise workloads. You can run a model on your own GPUs that performs within striking distance of a closed API, and on coding and math benchmarks it occasionally beats one. When the gap collapses, the way an enterprise should architect its AI stack changes in a few specific ways.

Data residency stops being a fire drill. EU AI Act enforcement is ramping, India's DPDP Act is reshaping how enterprises handle data, and Middle East and Southeast Asian residency rules tighten quarterly — but none of those headlines trigger anything on your end if your inference already runs on infrastructure you control. Vendor dependency becomes a choice rather than an inheritance: a closed API is a single point of dependency on someone else's roadmap, pricing, and policy, and policy in particular has become harder to predict over the last year. Cost shifts from per-token billing to GPU utilization, which is a problem your CFO can model without surprises.

This is the case we've been making at DhronAI since we started: that the real unit of enterprise AI is the system, not the model, and systems need to be owned. The technical premise is now unambiguous in a way it wasn't six months ago.

What the post-API stack looks like

The next two years of enterprise AI will be defined by companies that own their stack end to end. Not because closed models are bad — they're excellent — but because once 90% of frontier capability is available on weights you fully control, the remaining 10% stops being worth the strategic dependency for any enterprise serious about its data, its uptime, and its margins.

This is how AI-Inicio is built. AI Foundry handles fine-tuning across Llama, Mistral, Qwen, DeepSeek, and Gemma, taking enterprise data and producing production-grade models. Dhron is the reasoning layer that turns those models into systems instead of just outputs. AI Studio is where agents and workflows get composed and deployed. All three layers run self-hosted on the customer's infrastructure, with no external API dependency in the path.

We didn't build it that way as a bet that open weights would catch up. We built it that way because we believed enterprise AI is a systems problem, and the April releases are making that bet pay off faster than I expected.

The actual backend work

The gate that decides what actually ships to a customer is what we call the Council of Judges. Three large LLM judges from different model families evaluate each candidate model against five dimensions: factual correctness against golden answers, schema and structured-output compliance, hallucinated or fabricated content, domain-specific business rules (financial calculations, ERP logic, and the like), and reasoning validity. Any one of the three can veto. We use different model families on purpose — three instances of the same model would share the same blind spots and miss the same kind of mistake. The same gate applies whether we're considering a base-model upgrade in a customer's production pipeline or fine-tuning one of our own specialists.

That's how NAZAR, our vision model, and KLV, our multilingual speech model, were built and validated. Both sit alongside the open-weight stack rather than competing with it. The April wave doesn't replace purpose-built models; it raises the floor of the general-purpose layer they plug into, and the Council is what makes sure neither side regresses when the floor moves.

Around that sit the unglamorous pieces: inference scheduling across heterogeneous GPU pools, quantization profiles per model family, and observability that surfaces drift the moment it shows up in production. None of this gets a launch post, but it is what enterprise AI customers are actually paying for.

What's coming

The next twelve months will compress a lot of what the closed-model labs took years to build. The longer an enterprise waits to start moving toward self-hosted, open-weight infrastructure, the more of its prompts, fine-tunes, eval rigging, and operational muscle end up living in someone else's environment — and the harder that gets to unwind.

The tools to do this well exist now, the models are good enough, and the economics work. The harder problem is the systems work that turns those models into infrastructure, and that's where most of the next year's interesting engineering will happen.

If your enterprise is thinking about its AI infrastructure for the next 12 months, we're happy to talk — thirty minutes, real conversation, no sales script.